
8/6/2023 0 Comments

Snappy compression format

When you select "Allow insert" alone, or when you write to a new delta table, the target receives all incoming rows regardless of the Row policies set. If your data contains rows of other Row policies, they need to be excluded using a preceding Filter transform. When all Update methods are selected, a Merge is performed, where rows are inserted, deleted, upserted, or updated as per the Row policies set using a preceding Alter Row transform.

You can achieve higher throughput for write operations by optimizing the internal shuffle in Spark executors. As a result, you may notice fewer partitions and files that are of a larger size.

After any write operation has completed, Spark will automatically execute the OPTIMIZE command to re-organize the data, resulting in more partitions if necessary, for better reading performance in the future.

The associated data flow script is: `moviesAltered sink(`

With the partition pruning option under the Update method above (i.e. update/upsert/delete), you can limit the number of partitions that are inspected. Only partitions satisfying this condition are fetched from the target store. You can specify a fixed set of values that a partition column may take.

Delta sink script example with partition pruning: a sample script is given as below.

`DerivedColumn1 sink(`

Delta will only read 2 partitions, where part_col = 5 and 8, from the target delta store instead of all partitions.
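The partition pruning behavior described above can be pictured with a small sketch. This is not ADF or Delta code: the `prune_partitions` helper, the in-memory `target` dictionary, and the row layout are all hypothetical, and only the column name `part_col` and the values 5 and 8 come from the text.

```python
def prune_partitions(partitions, allowed_values):
    """Keep only the partitions whose key is in the allowed set.

    `partitions` maps a partition value of part_col to the rows stored
    under it (a hypothetical stand-in for the target delta store).
    """
    allowed = set(allowed_values)
    return {key: rows for key, rows in partitions.items() if key in allowed}


# Hypothetical target store with ten partitions, part_col = 1..10.
target = {
    value: [{"part_col": value, "id": i} for i in range(3)]
    for value in range(1, 11)
}

# Only the partitions for part_col = 5 and 8 are inspected during the merge.
inspected = prune_partitions(target, [5, 8])
print(sorted(inspected))  # [5, 8]
```

The point of the option is that the merge touches 2 partitions rather than all 10, so the cost of an update, upsert, or delete scales with the pruned set.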

The table action tells ADF what to do with the target Delta table in your sink. You can leave it as-is and append new rows, overwrite the existing table definition and data with new metadata and data, or keep the existing table structure but first truncate all rows, then insert the new rows.
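The three choices above differ only in what survives from the existing table. A minimal sketch, assuming a table is just a schema plus a list of rows; the action names `"append"`, `"overwrite"`, and `"truncate"` and the `apply_table_action` helper are hypothetical labels for illustration, not ADF identifiers.

```python
def apply_table_action(table, new_schema, new_rows, action):
    """Return the table that results from writing new_rows with the given action."""
    if action == "append":
        # Leave the table as-is and add the new rows.
        return {"schema": table["schema"], "rows": table["rows"] + list(new_rows)}
    if action == "overwrite":
        # Replace both the table definition and the data.
        return {"schema": list(new_schema), "rows": list(new_rows)}
    if action == "truncate":
        # Keep the existing structure, drop all rows, then insert the new rows.
        return {"schema": table["schema"], "rows": list(new_rows)}
    raise ValueError(f"unknown table action: {action!r}")


existing = {"schema": ["movieId", "title"], "rows": [(1, "A")]}
incoming_schema = ["movieId", "title", "year"]
incoming_rows = [(2, "B", 1999)]

appended = apply_table_action(existing, incoming_schema, incoming_rows, "append")
overwritten = apply_table_action(existing, incoming_schema, incoming_rows, "overwrite")
truncated = apply_table_action(existing, incoming_schema, incoming_rows, "truncate")
```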

Snappy overview: Snappy (was SnappyApp) lets you take always-on-top snaps of your screen, annotate them, share them encrypted with self-destruct, and keep everything neatly organized in your library and synced across your devices.

This connector is available as an inline dataset in mapping data flows as both a source and a sink.

The properties supported by a delta source, which you can edit in the Source options tab, include: the container/file system of the delta lake; whether the compression completes as quickly as possible or the resulting file should be optimally compressed; whether to query an older snapshot of a delta table; and a flag that, if true, means an error isn't thrown if no files are found.

Delta is only available as an inline dataset and, by default, doesn't have an associated schema. To get column metadata, click the Import schema button in the Projection tab. This allows you to reference the column names and data types specified by the corpus. To import the schema, a data flow debug session must be active, and you must have an existing CDM entity definition file to point to.

Delta source script example: `source(output(movieId as integer, …`

The properties supported by a delta sink can be edited in the Settings tab. The vacuum property deletes files older than the specified duration that are no longer relevant to the current table version. When a value of 0 or less is specified, the vacuum operation isn't performed.
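The vacuum rule above (delete files that are older than the retention duration and no longer relevant to the current table version, and skip the operation entirely for a value of 0 or less) can be sketched as follows. The `files_to_vacuum` helper, the file names, and the choice of hours as the unit are assumptions for illustration only.

```python
from datetime import datetime, timedelta


def files_to_vacuum(files, live_files, retention_hours, now):
    """Return the names of files a vacuum would delete.

    `files` maps file name -> last-modified time; `live_files` is the set
    of names still referenced by the current table version.
    """
    if retention_hours <= 0:
        # A value of 0 or less means the vacuum operation isn't performed.
        return []
    cutoff = now - timedelta(hours=retention_hours)
    return sorted(
        name
        for name, modified in files.items()
        if name not in live_files and modified < cutoff
    )


now = datetime(2023, 8, 6, 12, 0)
files = {
    "part-0001.parquet": now - timedelta(hours=200),  # old and unreferenced
    "part-0002.parquet": now - timedelta(hours=200),  # old but still referenced
    "part-0003.parquet": now - timedelta(hours=1),    # unreferenced but recent
}
live = {"part-0002.parquet"}

stale = files_to_vacuum(files, live, retention_hours=168, now=now)
skipped = files_to_vacuum(files, live, retention_hours=0, now=now)
```

Both conditions have to hold before a file is removed: age alone does not qualify a file that the current table version still references.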

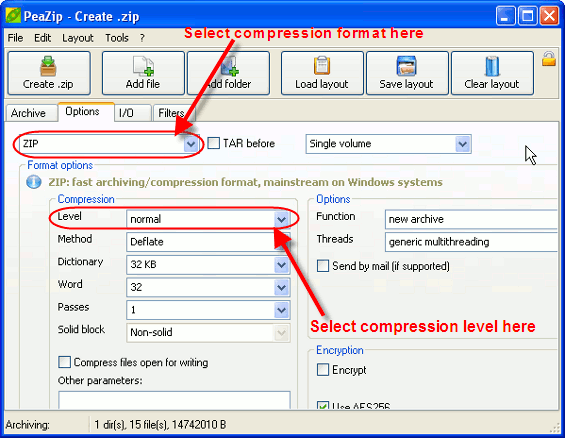

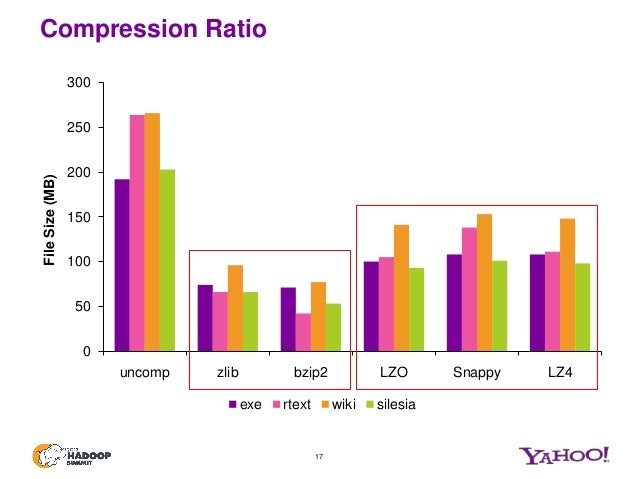

Snappy (previously known as Zippy, a name that still appears in some Google presentations and the like) is a fast data compression and decompression library written in C++ by Google, based on ideas from LZ77 and open-sourced in 2011. Decompression is tested to detect any errors in the compressed stream. Snappy does not use inline assembler (except for some optimizations) and is portable. Snappy is widely used in Google projects like Bigtable and MapReduce, and in compressing data for Google's internal RPC systems. It can be used in open-source projects like MariaDB ColumnStore, Cassandra, Couchbase, Hadoop, LevelDB, MongoDB, RocksDB, Lucene, Spark, and InfluxDB.

What is snappy Java? Snappy is a fast, general-purpose, lossless compression/decompression library, and snappy-java is a JNI-based wrapper of Snappy. Its sample code starts from a line of the form `byte[] compressed = Snappy.…`

In computing, tar is a computer software utility for collecting many files into one archive file, often referred to as a tarball, for distribution or backup purposes. The name is derived from "tape archive", as it was originally developed to write data to sequential I/O devices with no file system of their own.

Which compression tool provides the best compression ratio? For users needing maximum compatibility with recipients using different archive managers, the best choice is the ZIP format, in which WinRar and BandiZip provide the best compression speeds, even if PeaZip and 7-Zip provide marginally better compression ratios.
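To make the "ideas from LZ77" mentioned above concrete, here is a toy LZ77-style encoder and decoder. It is only a sketch of the general technique (replace repeated byte runs with back-references): it does not implement Snappy's actual format, and the token layout, the `window` and `min_match` parameters, and the function names are all made up for illustration.

```python
def lz77_compress(data: bytes, window: int = 255, min_match: int = 3):
    """Encode data as tokens: ('lit', byte) or ('copy', offset, length)."""
    tokens = []
    i = 0
    while i < len(data):
        best_len, best_off = 0, 0
        # Search the sliding window for the longest match starting at i.
        for j in range(max(0, i - window), i):
            length = 0
            # A match may run past position i (overlapping copy).
            while (i + length < len(data)
                   and length < 255
                   and data[j + length] == data[i + length]):
                length += 1
            if length > best_len:
                best_len, best_off = length, i - j
        if best_len >= min_match:
            tokens.append(("copy", best_off, best_len))
            i += best_len
        else:
            tokens.append(("lit", data[i]))
            i += 1
    return tokens


def lz77_decompress(tokens) -> bytes:
    """Rebuild the original bytes from the token list."""
    out = bytearray()
    for token in tokens:
        if token[0] == "lit":
            out.append(token[1])
        else:
            _, offset, length = token
            # Copy byte by byte so overlapping back-references work.
            for _ in range(length):
                out.append(out[-offset])
    return bytes(out)


sample = b"abcabcabcabc snappy snappy snappy"
tokens = lz77_compress(sample)
restored = lz77_decompress(tokens)
assert restored == sample
```

The quadratic match search here favors clarity over speed. Production libraries such as Snappy trade compression ratio for throughput and use much faster match finding.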
